Conversational Analytics in Gemini BigQuery: How to "Talk" to your Data Warehouse in practice? (Webinar Summary)

⏱️ Reading time: approx. 8 minutes

Our recent webinar on modern analytics attracted a large number of participants, and the volume of questions made it clear just how interested you are in the topic. The traditional model, in which every data request goes into a long queue with analysts, is no longer effective in the fast-paced world of AI.

Implementing solutions based on conversational analytics allows companies to respond more quickly to market changes.

Prompted by your engagement and the most common questions, we have prepared this article. We expand on the topics discussed during the meeting, focusing on how conversational analytics based on Gemini and BigQuery truly changes the game in accessing information.

Introduction to Gemini and BigQuery

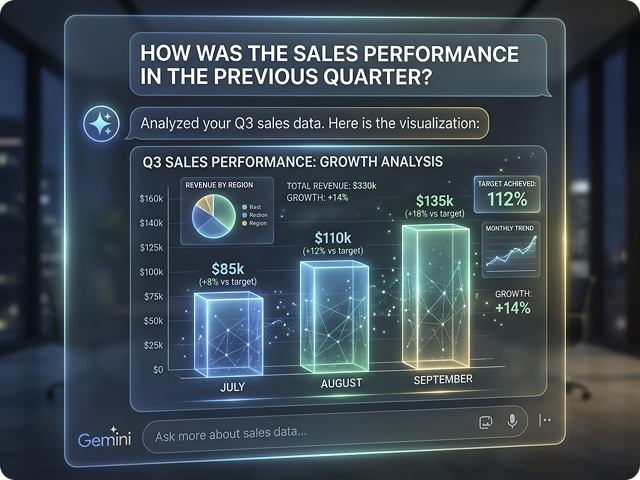

Companies are generating data faster than ever before, which is why they need tools that allow them not only to collect information, but above all to use it effectively. This is where Gemini comes in: an advanced artificial intelligence (AI) technology developed by Google. Gemini isn’t just another AI model; it’s a true breakthrough in how users can interact with data.

Thanks to Gemini, it is possible to perform complex tasks and generate outputs in natural language. Users no longer need to know complicated query languages or coding; it is enough to ask a question the way we speak every day. In this sense, conversational analytics is the ability to query business data using natural language instead of writing complex SQL queries. This is a huge change for businesses, which can respond faster to market needs and make decisions based on up-to-date data.

The combination of Gemini with BigQuery, one of Google’s most powerful analytical tools for processing and analyzing large data sets, opens up completely new possibilities. Google AI models, such as Gemini, can be enabled and managed within the Google Cloud console to enhance data analysis and machine learning capabilities. Users can analyze, explore, and report data intuitively, directly in an environment that guarantees scalability and enterprise-level security. This solution allows companies to move from traditional, time-consuming processes to modern, conversational analytics based on AI – faster, simpler, and more effectively than ever before.

Why is natural language processing in Conversational Analytics a breakthrough for companies?

The problem for today’s companies is not a lack of data but the difficulty of interpreting it quickly. Conversational analytics removes the technological barrier, allowing dialogue with data systems in natural language. It also makes it possible to automate repetitive tasks and streamline team activities, which translates into time savings and greater business efficiency.

Key benefits:

- Data democratization in practice: Marketing (CMO), sales, or HR directors gain full independence. They can ask about “customer LTV from the last quarter” or “causes of retention decline” without knowing SQL.

- Dramatic shortening of Time-to-Value: Instead of waiting days for a report, the answer appears in seconds. This allows immediate drill-down and real-time reaction to anomalies. Users can instantly see the results of their queries and make decisions faster than ever before.

- A new role for Data teams: Engineers are relieved of repetitive tasks. Instead of repeatedly pulling the same Excel reports, they can focus on analyzing data patterns, preparing recommendations for the business, building advanced ML models, and optimizing architecture.

In summary, conversational analytics lets companies analyze many types of content and data and tailor solutions to customer needs. It helps businesses understand customers, identify trends, and turn unstructured conversations into structured data for actionable Voice of the Customer insights, which leads to measurable results.

Why do AI Projects in analytics often fail? (key risks)

Implementing conversational analytics seems simple “on paper,” but the devil is in the engineering details. Understanding each step of the implementation is key to project success. Here are the most common pitfalls that can cause a project not to deliver the expected value:

- 1. Lack of trust in data (Silos)

If sales and marketing departments use different definitions of “active customer,” the data agent will provide conflicting information. AI will not fix data chaos – it only highlights it. Proper team training helps avoid interpretative errors. Success requires prior consolidation of sources in a single data warehouse (Single Source of Truth).

- 2. Skipping the semantic layer

An agent is as smart as the instructions it receives. Without so-called Golden Queries (model SQL queries) and a clearly defined semantic layer, the Gemini model may wrongly interpret relationships between tables. This is the most common cause of AI “hallucinations” in results. Precise configuration options help reduce the risk of incorrect outputs. AI agents work best when their role, personality, and communication style are clearly defined, along with specific instructions and tools they can use.

- 3. Neglecting Data Governance and security

Deploying AI at an enterprise scale requires strict access control. Every user should see only the data they are authorized to access (Document Level ACL). Secure architecture inside Vertex AI ensures that company data does not leak to public Gemini models. Configuration commands and detailed permission settings are essential for maintaining security.

- 4. Resistance to cultural change

Moving from “my favorite Excel” to a central conversational system requires change management. People must trust the system, and that trust is built through transparency – the system must be able to explain how it arrived at a given result. Clear definition of implementation goals facilitates acceptance of new solutions by the team.
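To make pitfall 2 more concrete, here is a minimal sketch of how column descriptions and Golden Queries could be collected and rendered as instructions for a model. It is an illustration only: the `GoldenQuery` and `SemanticLayer` classes, the `sales.orders` table, and the 90-day definition of an "active customer" are all hypothetical and do not reflect any Gemini or BigQuery API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class GoldenQuery:
    """A verified SQL query paired with the business question it answers."""
    question: str
    sql: str


@dataclass
class SemanticLayer:
    """Column descriptions plus golden queries for one subject area."""
    column_descriptions: dict[str, str] = field(default_factory=dict)
    golden_queries: list[GoldenQuery] = field(default_factory=list)

    def to_prompt_context(self) -> str:
        """Render the layer as plain text that can be passed to a model."""
        lines = ["Column definitions:"]
        for column, description in sorted(self.column_descriptions.items()):
            lines.append(f"- {column}: {description}")
        lines.append("Verified example queries:")
        for gq in self.golden_queries:
            lines.append(f"Q: {gq.question}")
            lines.append(f"SQL: {gq.sql}")
        return "\n".join(lines)


# Hypothetical table, columns, and business definition (assumptions).
layer = SemanticLayer(
    column_descriptions={
        "sales.orders.customer_id": "Unique customer identifier",
        "sales.orders.order_date": "Date the order was placed (UTC)",
    },
    golden_queries=[
        GoldenQuery(
            question="How many active customers do we have?",
            sql=(
                "SELECT COUNT(DISTINCT customer_id) FROM sales.orders "
                "WHERE order_date >= DATE_SUB(CURRENT_DATE(), INTERVAL 90 DAY)"
            ),
        )
    ],
)

context = layer.to_prompt_context()
print(context)
```

Keeping one agreed definition per KPI in a structure like this is what lets sales and marketing receive the same answer to the same question.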

Multiple AI Agents in Data Engineering – automating platform building

During the second part of the webinar, Mariusz Czopiński from Google demonstrated that the role of artificial intelligence goes far beyond simply answering business questions. We saw AI Agents in action, becoming real support for data engineers by taking over the most tedious and repetitive technical processes. Agent technology enables AI agents to perform and complete routine tasks autonomously, going beyond basic programming to actively pursue goals and manage complex workflows.

This is a new approach to automation within Google Cloud, changing how we think about infrastructure creation. At the same time, building advanced AI agents requires specialized knowledge, access to appropriate tools, and large data sets, which is a challenge for many organizations.

- Natural language instead of tedious coding: An AI agent can independently design and create entire data pipelines (ETL pipelines) based on your description. Instead of manually configuring each connection, you describe the goal, and the technology handles the rest. The automatic code generation feature can be integrated with studio tools like Looker Studio or Dataform, significantly streamlining the analytics solution creation process.

- Intelligent data cleaning: Mariusz showed how agents handle Data Quality challenges. The system can autonomously deduplicate records and ensure consistency of information within BigQuery. AI agents can interact with external systems to gather data and enhance their perception. Integration with VS Code enables developers to quickly deploy fixes directly in the IDE environment, accelerating the entire creation and maintenance process.

- Automatic modeling (e.g., Star Schema): This was one of the most interesting demo points. We showed how, using natural language, advanced data models like star schemas can be built. The agent understands relationships between tables and can organize them into a structure optimized for efficient analytics. A practical example of AI agents’ use is analyzing email messages to detect spam – the agent analyzes message content, recognizes patterns, and automatically filters unwanted emails.

As a result, this is not just “faster reporting” but primarily a drastic acceleration of data platform building. What used to require weeks of work by an engineering team on designing schemas and flows can today be created in a fraction of that time thanks to AI agents in Google Cloud. Organizations can deploy AI agents on serverless platforms like Cloud Run to operationalize complex single- or multi-agent systems efficiently. The next step may be expanding the platform with website management or further dedicated application development, making fuller use of the potential of conversational analytics. AI agents improve over time by learning from past interactions and can facilitate transactions and business processes.
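For readers who want to picture the result of the deduplication and star-schema steps described above, the following is a small, self-contained sketch in plain Python. It does not show how the agent from the demo works internally. The field names and sample rows are invented, and in BigQuery these steps would normally be expressed in SQL.

```python
def deduplicate(rows, key_fields):
    """Keep the first occurrence of each row, comparing only key_fields."""
    seen = set()
    unique = []
    for row in rows:
        key = tuple(row[f] for f in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


def build_dimension(rows, attribute):
    """Assign a surrogate key (1, 2, 3, ...) to each distinct attribute value."""
    dimension = {}
    for row in rows:
        value = row[attribute]
        if value not in dimension:
            dimension[value] = len(dimension) + 1
    return dimension


def build_star_schema(rows):
    """Split flat order rows into two dimensions and one fact table."""
    customers = build_dimension(rows, "customer")
    products = build_dimension(rows, "product")
    facts = [
        {
            "order_id": row["order_id"],
            "customer_key": customers[row["customer"]],
            "product_key": products[row["product"]],
            "amount": row["amount"],
        }
        for row in rows
    ]
    return customers, products, facts


# Hypothetical flat export containing one duplicated order (assumption).
raw_orders = [
    {"order_id": 1, "customer": "Acme", "product": "Widget", "amount": 120.0},
    {"order_id": 2, "customer": "Globex", "product": "Widget", "amount": 80.0},
    {"order_id": 1, "customer": "Acme", "product": "Widget", "amount": 120.0},
    {"order_id": 3, "customer": "Acme", "product": "Gadget", "amount": 45.5},
]

clean_orders = deduplicate(raw_orders, key_fields=["order_id"])
dim_customer, dim_product, fact_orders = build_star_schema(clean_orders)
print(dim_customer, dim_product, fact_orders)
```

The fact table keeps only keys and measures, while descriptive attributes live in the dimensions, which is the structure the agent is said to propose from a natural-language description.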

Enterprise-Class security for Customer Data

For many webinar participants, data security was a key issue. With solutions like Vertex AI, your company data is completely isolated and protected from external access. It is not used for training public Gemini models, which guarantees full control over confidentiality. The entire operation takes place in your secure cloud environment, with strict access permissions such as Document Level ACL, allowing precise definition of who has access to which data.

Thanks to this solution, companies can safely use advanced conversational analytics features, confident that their business data is protected at enterprise-class levels. Such security architecture is crucial for deployments in environments with high data protection requirements, e.g., in the financial or medical sectors.
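The idea behind document-level access control can be illustrated with a short sketch: before any content reaches the model, it is filtered against the groups the user belongs to. This is a conceptual illustration only. The `visible_documents` function, the `allowed_groups` field, and the sample documents are hypothetical, and in a real deployment the platform's own permission system should enforce this rather than application code.

```python
def visible_documents(documents, user_groups):
    """Return only documents whose ACL shares at least one group with the user."""
    groups = set(user_groups)
    return [doc for doc in documents if groups & set(doc["allowed_groups"])]


# Hypothetical documents and group names (assumptions).
documents = [
    {"id": "payroll-2024", "allowed_groups": ["hr"]},
    {"id": "q3-revenue", "allowed_groups": ["finance", "management"]},
    {"id": "brand-guidelines", "allowed_groups": ["hr", "finance", "marketing"]},
]

hr_view = visible_documents(documents, user_groups=["hr"])
hr_ids = [doc["id"] for doc in hr_view]
print(hr_ids)
```

Because filtering happens before the model sees anything, a user without the right group membership cannot receive an answer built on restricted documents.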

Challenges and limitations of Conversational Analytics

While conversational analytics offers significant advantages, it also presents several challenges and limitations that businesses must address to achieve optimal results. One of the primary hurdles is ensuring high data quality, since the effectiveness of conversational analytics depends on accurate, comprehensive customer interaction data. Incomplete or inconsistent data can lead to misleading insights and suboptimal decision making.

Another challenge lies in the complexity of human language. Conversational analytics tools must interpret not only the words used but also the context, tone, and intent behind them. Ambiguities, slang, and cultural nuances can make it difficult for AI systems to accurately analyze customer conversations, especially when relying solely on automated processes.

Integrating conversational analytics with existing business processes and systems can also be resource-intensive. Achieving seamless integration often requires significant investment in both technology and change management. However, advancements in large language models (LLMs) and other AI technologies are helping to bridge these gaps, improving the accuracy and adaptability of conversational analytics solutions.

To overcome these challenges, businesses should prioritize robust data collection, invest in advanced AI tools, and develop clear strategies for integrating conversational analytics into their broader business processes.

Q&A from the Webinar: the most interesting questions about Conversational Analytics

Many specific questions were asked during the meeting. We selected those that best illustrate the challenges companies face when planning conversational analytics implementation:

1. How should data be prepared so that AI knows how to interpret a term such as “profitability”?

The key is the semantic layer. AI needs metadata: precise descriptions of columns in BigQuery, plus so-called Golden Queries. These are model SQL queries that teach the model how specific KPIs are calculated in your company context. Thanks to this, AI does not guess but uses verified business logic.

2. What is the cost of using this solution? Is it only data transfer?

The cost model usually includes two main components: data processing in BigQuery (standard query costs) and the Gemini language model costs in Vertex AI (token fees, i.e., length of queries and responses). It is worth noting that conversational analytics optimizes human labor costs, which is the main ROI element.

3. Does the Agent have access to the entire data set or limited datasets?

You decide. Integration with Google Cloud allows precise permission control (IAM). The agent can be limited to specific datasets, tables, or even rows. Thanks to this, an HR department user will never access financial data if they are not authorized.

4. How do agents handle dozens or hundreds of heavy tables?

For very complex structures, metadata filtering is used, or several specialized agents are created (e.g., logistics agent, sales agent). The agent first analyzes metadata to identify only tables relevant to the question, which avoids chaos and errors.

5. Can the agent be configured to stick only to specific topics and not “talk” about anything else?

Yes, this is a key enterprise-class security element. Thanks to so-called system prompts and grounding mechanisms, we can strictly define the agent’s knowledge scope. User requests serve as prompts or inputs that trigger the AI agent to perform tasks, respond with information, or execute actions. If a user asks about the weather or other topics unrelated to business data, the agent politely informs them that its role is limited to analyzing specific BigQuery areas. This prevents “function dilution” of the model and increases user trust.

6. If I tell the agent what charts I need on the dashboard, will it design an optimized data model itself?

This is one of the most powerful functions of AI agents in data engineering. The agent can analyze your visualization needs and suggest the best table structure (e.g., creating appropriate joins or aggregations). It can even assess whether it is better to prepare one common data source for two different charts or two separate ones for faster and more efficient dashboard operation.

7. How to create your own custom agent under a specific link for employees?

In the Google Cloud environment (Vertex AI), dedicated agent applications can be built. Such an agent can be embedded inside a company portal, integrated with messengers (e.g., Google Chat or Slack), or offered as a separate web application under a dedicated address. Thanks to the API, the agent becomes an integral part of your tool ecosystem, not just a hidden function in the admin console.

8. When in the project should the semantic model for conversational analytics be created?

The semantic model is a foundation that should be created right after data integration and basic structure building in BigQuery. It should not wait until reporting tools (like Looker or Tableau) are in place. Building the semantic model early creates a “common language” for AI and people, ensuring that every conversational query is based on the same business definitions from the beginning.
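Returning to question 4, the metadata filtering step can be pictured with a deliberately simple sketch that ranks tables by word overlap between the user's question and each table description. This is an assumption about the general idea, not the mechanism Gemini uses. A production system would more likely rely on embeddings or on the model itself. The `select_relevant_tables` function and the catalog entries are hypothetical.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_relevant_tables(question, table_descriptions, max_tables=3):
    """Rank tables by how many question words appear in their description."""
    question_words = tokenize(question)
    scored = []
    for table, description in table_descriptions.items():
        overlap = len(question_words & tokenize(description))
        if overlap > 0:
            scored.append((overlap, table))
    # Highest overlap first; ties are broken alphabetically by table name.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [table for _, table in scored[:max_tables]]


# Hypothetical catalog of table descriptions (assumption).
catalog = {
    "logistics.shipments": "shipment delivery dates carriers and warehouse",
    "sales.orders": "customer orders with revenue and order date",
    "hr.employees": "employee records departments and hire date",
}

selected = select_relevant_tables(
    "What was revenue per customer last month?", catalog, max_tables=2
)
print(selected)
```

Only the shortlisted tables would then be described to the model, which keeps the context small even when the warehouse holds hundreds of tables.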

Summary: Your data is waiting to talk

The future of analytics is not static charts but dynamic dialogue. Companies that implement conversational mechanisms faster will gain a huge operational advantage. As our webinar and numerous questions from business practitioners showed, the market is already ready for this change.

Want to build solid AI foundations in your organization? Contact us. We will help you move from data silos to intelligent conversational analytics that truly supports your business.